[continued from part II]

This, my first Mac, consisted of:

- a system unit with 128K of RAM, 64K of ROM containing the system toolbox and boot software, a 9″ black-and-white display (512×342 pixels), a small speaker, a single-sided 400K floppy disk drive, two serial ports using DB9 connectors, a ball-based mouse also connected via DB9, and an integrated power supply;

- a small keyboard with no cursor keys or numeric keypad, connecting to the front of the system over a 4-pin phone connector;

- a second 400K floppy drive, which connected to the back of the system;

- an 80-column dot matrix Imagewriter II printer, connecting to one of the serial ports;

- System 1 (though it wasn’t called that yet) on floppy disks with MacPaint on one, and MacWrite on the other;

- a third-party 512K RAM expansion board which fit somewhat precariously over the motherboard but worked well enough (this RAM upgrade board, from Beck-Tech, was actually 1024K, and I now remember buying it a year later);

- a boxy carrying case where everything but the printer would fit (I didn’t buy Apple’s version, though; I went to Berkeley and bought it together with a BMUG membership and a box of user group software);

- a poster with the detailed schematics of both Mac boards (motherboard and power supply);

- a special tool which had a long Torx-15 key on one end and a spreading tool on the other end (the Mac’s rather soft plastic was easily marred by anything else);

- the very first version of Steve Jasik’s MacNosy disassembler software.

All this cost almost $4000 but it was worth every cent. (Also see the wonderful teardown by iFixit.)

Taking it back to Brazil proved to be quite an ordeal, however. We had made arrangements to get my suitcase unopened through customs, but at the last minute I was advised to skip my scheduled flight and come in the next day.
We hadn’t considered the fact that the 1984 Olympics were happening in LA that month, and getting onto the next flight in front of a huge waiting list of people was, of course, “impossible”! As they say, necessity is the mother of invention and I promptly told one of the nice VARIG attendants that I would miss my wedding if she didn’t do something — anything! She promised to try her utmost and early the next morning she slipped me a boarding pass in the best undercover agent manner. And her colleagues on board made quite a fuss about getting the best snacks for “the bridegroom”… 😉 Anyway, after that everything went well and I arrived safe and sound with my system. More on what we did with it in part IV.


[continued from part I]

In 1983 I’d started working at a Brazilian microcomputer company, Quartzil. They already had the QI800 on the market, a simple CP/M-80 computer (using the Z80 CPU and 8″, 243K diskettes) and wanted to expand their market share by doing something innovative. I was responsible for the system software and was asked for my opinion about what a new system should do and look like. We already had all read about the Apple Lisa and about the very recent IBM PC which used an Intel 8088 CPU.

After some wild ideas about making a modular system with interchangeable CPUs, with optional Z80, 8008 and 68000 CPU boards, we realized that it would be too expensive, not least because it would have needed a large bus connector that was not available in Brazil and would be hard to import. (The previous QI800 used the S100 bus, so called because of its 100-pin connector; since by a happy coincidence the middle 12 pins were unused, they had put in two 44-pin connectors which were much cheaper.)

Just after the Mac came out in early 1984 we began considering the idea of cloning it. We ultimately decided the project would be too expensive, and soon we learned that another company — Unitron — was trying that angle already.

Cloning issues in Brazil at that time are mostly forgotten and misunderstood today, and merit a full book! Briefly, the government tried to “protect” Brazilian computer companies by not allowing anything containing a microprocessor chip to be imported; the hope was that the local industry would invest and build their own chips, development machines and, ultimately, a strong local market. What legislators didn’t understand was that it was a very difficult and high-capital undertaking. To make things more complex, the same companies they were trying to protect were hampered by regulations and had to resort to all sorts of tricks; for instance, our request to import an HP logic analyzer to debug the boards turned out to take 3 years (!) to process; by the time the response arrived, we already had bought one on the gray market.

Since, theoretically, the Brazilian market was entirely separate from the rest of the world, and the concept of international intellectual property was in its infancy, cloning was completely legal. In fact, there were already over a dozen clones of the Apple II on the market and selling quite well! This was, of course, helped by Apple publishing their schematics. A few others were trying their hands at cloning the PC and found it harder to do; this was before the first independent BIOS was developed.

To get back to the topic, it was decided to send me to the NCC/84 computer conference in Las Vegas to see what was coming on the market in the US and to buy a Mac to, if nothing else, help us in the development process. (In fact, it turned out to be extremely useful — I used it to write all documentation and also to write some auxiliary development software for our new system.)

It was a wonderful deal for me. The company paid my plane tickets and hotel, I paid for the Mac, we all learned a lot. I also took advantage of the trip to polish my English, as up to that point I’d never had occasion to speak it.

The NCC was a huge conference and, frankly, I don’t remember many details. I do remember seeing from afar an absurdly young-looking Steve Jobs, in suit and tie, meeting with some bigwigs inside the big, glassed-in Apple booth. I collected a lot of swag, brochures and technical material; together with a huge weight of books and magazines, that meant that I had to divide it into boxes and ship all but the most pertinent stuff back home separately. I think it all amounted to about 120Kg of paper, meaning several painful trips to the nearest post office.

The most important space in my suitcase was, of course, reserved for the complete Mac 128 system and peripherals. More about that in the upcoming part III.

30 years ago, when the first issue of MacWorld Magazine came out – the classic cover with Steve Jobs and 3 Macs on the front – I could already look back on some years as an Apple user. In the early days of personal computers, the mid-1970s, the first computer magazines appeared: Byte, Creative Computing, and several others. I read the debates about the first machines: the Altair and, later, the Apple II; the TRS80; the Commodore PET, and so forth.

It was immediately clear to me that I would need one of those early machines. I’d already been working with mainframes like the IBM/360 and Burroughs B6700, but those new microcomputers already had as much capacity as the first IBMs I’d programmed for, just 8 years later.

So as soon as possible I asked someone who knew someone who could bring in electronics from the USA. Importing these things was prohibited but there was a lively gray market and customs officials might conveniently look the other way at certain times. Anyway, sometime in 1979 I was the proud owner of an Apple II+ with 48K of RAM, a Philips cassette recorder, and a small color TV with a hacked-together video input. (The TV didn’t really like having its inputs externally exposed and ultimately needed an isolating power transformer.)

The Apple II+ later grew to accommodate several accessory boards, dual floppy drives, a Z80 CPU board to run CP/M-80, as well as a switchable character generator ROM to show lower-case ASCII as well as accents and the special characters used by Gutenberg, one of the first word processors that used SGML markup – a predecessor of today’s XML and HTML. I also became a member of several local computer clubs and, together, we amassed a huge library of Apple II software; quite a feat, since you couldn’t directly import software or even send money to the USA for payment!

Hacking the Apple II’s hardware and software was fun and educational. There were few compilers and the OS was primitive compared to the mainframe software I’d learned, but it was obvious that here was the future of computing.

There were two influential developments in the early 1980s: first, there was the Smalltalk issue of Byte Magazine in 1981; and then the introduction of the Apple Lisa in early 1983. Common to both was the black-on-white pixel-oriented display, which I later learned came from the Xerox Star, together with the use of a mouse, pull-down menus, and the flexible typography now familiar to everybody.

Needless to say, I read both of those magazines (and their follow-ups) uncounted times and analysed the screen pictures with great care. (I also bought as many of the classic Smalltalk books as I could get, though I never actually succeeded in getting a workable Smalltalk system running.)

So I can say I was thoroughly prepared when the first Mac 128K came out in early 1984. I practically memorized all articles written about it and in May 1984 I was in a store in Los Angeles – my first trip to the US! – buying a Mac 128K with all the options: external floppy, 3 boxes of 3.5″, 400K Sony diskettes and an 80-column Imagewriter printer. (The 132-column model wouldn’t fit into my suitcase.) Thanks to my reading I was able to operate it immediately, to the amazement of the store salesman.

More about this in the soon-to-follow second part of this post. Stay tuned.

This post has been updated several times (last update was on Feb.8, 2013); be sure to scroll to the end. Also see my final follow-up in 2014.

One central feature of any connector/plug is the pin count. The ubiquitous AC plugs we all know from an early age have 2 (or, more usually, 3) easily visible pins and of course the AC outlet is supposed to have the same number – and, intuitively, we know that the cable itself has the same number of wires. Depending on where you live, you may also be intimately familiar with adapters or conversion cables that have one type of plug on one end and a different type on the other. Here’s one AC adapter we’ve become used to here in Brazil, after the recent (and disastrous) change to the standard:

Even with such a simple adapter – if you open it, there are just three metal strips connecting one side to the other – mistakes can be made. This specific brand’s design is faulty, assuming that the two AC pins are interchangeable. This is true for 220V, but in an area where 110V is used, neutral and hot pins will be reversed, which can be dangerous if you plug an older 3-pin appliance into such an adapter.

Still, my point here is that everybody is used to cables and adapters that are simple, inexpensive, and consist just of wires leading from one end to the other – after all, this is true for USB, Ethernet, FireWire, and so forth. Even things like DVI-VGA adapters seem to follow this pattern. But things have been getting more complicated lately. Even HDMI cables, which have no active components anywhere, transmit data at such speeds that careful shielding is necessary, and cable prices have stayed relatively high; if you get a cheap cable, you may find out that it doesn’t work well (or at all).

The recent Thunderbolt cables show the new trend. Thunderbolt has two full-duplex 10Gbps data paths and a low-speed control path, so you need two high-speed driver chips at each end of the cable (one next to the connector, one in the plug). As a result, these cables sell in the $50 price range, and it will take a long time for prices to drop even slightly.

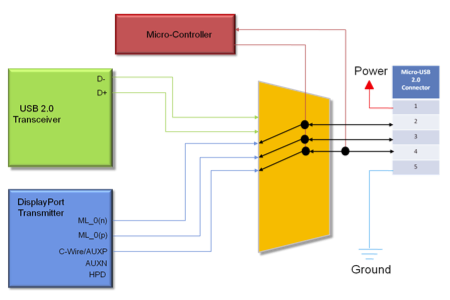

DisplayPort is an interesting case; it has 1-4 data paths that can run at 1.3 to 4.3Gbps, and a control path. The original connector had limited adoption and when Apple came out with their smaller mini version, it was quickly incorporated into the standard, and also reused for Thunderbolt. An even smaller version, called MyDP, is due soon. Analogix recently came out with an implementation of MyDP which they call SlimPort. MyDP is intended for mobile devices and squeezes one of the high-speed paths and the control paths down to 5 pins, allowing it to use a 5-pin micro-USB connector. Here’s a diagram of the architecture on the device side:

If you read the documentation carefully, right inside the micro-USB plug you need a special converter chip which converts those 3 signals to HDMI, and from then on, up to the other end of the cable, you have shielded HDMI wire pairs and an HDMI connector. Of course, this means that you can’t judge that cable by the 5 pins on one end, nor can you say that that specific implementation “transmits audio/video over USB”. It just repurposes the connector. Such a cable would, of course, be significantly more expensive to manufacture than the usual “wires all the way down” cable, and (because of the chip) even more than a standard HDMI cable.

Still referring to the diagram above, if you substitute the blue box (DisplayPort Transmitter) for another labeled “MHL Transmitter”, you have the MHL architecture, although some implementations use an 11-pin connector. Common to both MHL and MyDP is the need for an additional transmitter (driver) chip as well as a switcher chip that goes back and forth between that and the USB transceivers. This, of course, implies additional space on the device board for these chips, traces and passive components, as well as increased power consumption. You can, of course, put in a micro-HDMI connector and drive that directly, but that would save neither space nor power.

Is there another way to transmit audio/video over a standard USB implementation? There are device classes for that, but they’re mostly capable of low-bandwidth applications like webcams; at least for USB2. Ah, but what of USB3? That has serious bandwidth (5Gbps) that certainly can accommodate large-screen, quality video, as well as general high-speed data transfer – not up to Thunderbolt speeds, though. You need a USB3 transceiver chip in the blue box above, and no switcher chip; USB3 already has a dedicated pin pair for legacy USB2 compatibility. All that’s needed is the necessary bandwidth on the device itself; and here’s where things start to get complicated again.
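To put a rough number on that bandwidth claim, here is a back-of-the-envelope sketch in Python. The assumptions are mine and are not taken from any document quoted here: 24 bits per pixel, no blanking intervals, no compression, and 8b/10b line coding on the 5Gbps link, with all higher-level protocol overhead ignored. The function names are made up for this illustration.

```python
# Back-of-the-envelope check: does uncompressed video fit into USB3?
# Assumptions: 24 bits per pixel, no blanking, no compression, and 8b/10b
# line coding on the 5 Gbps link; protocol overhead is ignored entirely.

USB3_RAW_BPS = 5_000_000_000                 # signalling rate on the wire
USB3_PAYLOAD_BPS = USB3_RAW_BPS * 8 // 10    # 8 data bits per 10 line bits


def video_bps(width, height, fps, bits_per_pixel=24):
    """Raw bit rate of an uncompressed video stream."""
    return width * height * fps * bits_per_pixel


def fits_usb3(width, height, fps):
    """True if the raw stream fits into the 8b/10b payload rate."""
    return video_bps(width, height, fps) <= USB3_PAYLOAD_BPS


if __name__ == "__main__":
    modes = {
        "720p60": (1280, 720, 60),
        "1080p60": (1920, 1080, 60),
        "2560x1600@60": (2560, 1600, 60),
    }
    for name, (w, h, fps) in modes.items():
        gbps = video_bps(w, h, fps) / 1e9
        verdict = "fits" if fits_usb3(w, h, fps) else "does not fit"
        print(f"{name}: {gbps:.2f} Gbps, {verdict}")
```

Under these assumptions 1080p at 60 frames per second needs just under 3Gbps and fits, while a 2560×1600 panel at the same rate would not fit uncompressed.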

You see, there’s serious optimization already going on between the processor and display controller – in fact, all that is on a single chip, the SoC (System-on-a-Chip), labelled A6 in the iFixit teardown. Generating video signals in some standard mode and pulling it out of the SoC needs only a few added pins. If you go the extra trouble to also incorporate a USB3 driver on the SoC and a fast buffer RAM to handle burst transfers of data packets, the SoC can certainly implement the USB3 protocols. But – and that’s the problem – unlike video, that data doesn’t come at predictable times from predictable places. USB requires software to handle the various protocol layers, and between that and the necessity to, at some point, read or write that data to and from Flash memory, you run into speed limits which make it unlikely that full USB3 speeds can be handled by current implementations.

But, even so, let’s assume, for the sake of argument, that the A6 does implement all this and that both it and the Flash memory can manage USB3 speeds. Will, then, a Lightning-to-USB3 cable come out soon? Is that even possible? (You probably were wondering when I would get around to mentioning Lightning…)

Here’s where the old “wires-all-the-way-down” reflexes kick in, at least if you’re not a hardware engineer. To quote from that link:

Although it’s clear at this point that the iPhone 5 only sports USB 2.0 speeds, initial discussions of Lightning’s support of USB 3.0 have focused on its pin count—the USB “Super Speed” 3.0 spec requires nine pins to function, and Lightning connectors only have eight.

…The Lightning connector itself has two divots on either side for retention, but these extra electrical connections in the receptacle could possibly be used as a ground return, which would bring the number of Lightning pins to the same count as that of USB 3.0—nine total.

(…followed, in the comments, by discussions of shields and ground returns and…)

Of course, that contains the following failed assumptions (beyond what I just mentioned):

- Lightning is just a USB3 interface in disguise, and

- Cables and connectors are always wired straight-through, at most with a shield around the cable.

- If there are any chips in the connector, they must be sinister authentication chips!

These assumptions also underlie the oft-cited intention of “waiting for the $1 cables/adapters”. But, recall that Apple specifically said that Lightning is an all-digital, adaptive interface. USB3 is not adaptive, although it can be called digital in that it has two digital signal paths implemented as differential pairs. If you abandon assumptions 1 and 2, assumption 3 becomes just silly. Remember, the SlimPort designers put a few simple digital signals on the connector and converted them – just a cm or so away – into another standard’s differential wire pairs by putting a chip inside the plug.

So, summing up all I said here and in my previous posts:

- Lightning is adaptive.

- All 8 pins are used for signals, and all or most can be switched to be used for power. So it makes no sense to say “Lightning is USB2-only” or whatever. (But see update#5, below.)

- The outer plug shell is used as ground reference and connected to the device shell.

- At least one (probably at most two) of the pins is used for detecting what sort of plug is plugged in.

- All plugs have to contain a controller/driver chip to implement the “adaptive” thing.

- The device watches for a momentary short on all pins (by the leading edge of the plug) to detect plug insertion/removal. (This has apparently been disproved by some cheap third-party plugs that don’t have a metal leading edge.)

- The pins on the plug are deactivated until after the plug is fully inserted, when a wake-up signal on one of the pins cues the chip inside the plug. This avoids any shorting hazard while the plug isn’t inside the connector.

- The controller/driver chip tells the device what type it is, and for cases like the Lightning-to-USB cable whether a charger (that sends power) or a device (that needs power) is on the other end.

- The device can then switch the other pins between the SoC’s data lines or the power circuitry, as needed in each case.

- Once everything is properly set up, the controller/driver chip gets digital signals from the SoC and converts them – via serial/parallel, ADC/DAC, differential drivers or whatever – to whatever is needed by the interface on the other end of the adapter or cable. It could even re-encode these signals to some other format to use fewer wires, gain noise-immunity or whatever, and re-decode them on the other end; it’s all flexible. It could even convert to optical.
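The sequence in the list above can be sketched as a toy model. To be clear, this only illustrates my speculation and is not Apple’s protocol: the names `Plug`, `Port` and `PinRole`, the choice of pin 0 as the identification pin, and the rule that all leftover pins are switched to power are assumptions invented for the example.

```python
from dataclasses import dataclass
from enum import Enum


class PinRole(Enum):
    IDLE = "idle"    # deactivated: nothing is plugged in
    ID = "id"        # talks to the controller chip inside the plug
    DATA = "data"    # switched to the SoC's data lines
    POWER = "power"  # switched to the power circuitry


@dataclass
class Plug:
    """What the (hypothetical) chip inside the plug reports about itself."""
    kind: str        # e.g. "usb-cable"; labels are invented for this sketch
    data_pins: int   # how many pins the far-end interface needs for data


class Port:
    PIN_COUNT = 8    # Lightning has 8 signal pins

    def __init__(self):
        self.roles = [PinRole.IDLE] * self.PIN_COUNT

    def insert(self, plug: Plug) -> None:
        if not 0 <= plug.data_pins <= self.PIN_COUNT - 1:
            raise ValueError("plug asks for more data pins than are available")
        # 1. Wake-up: one pin is reserved for talking to the plug's chip.
        roles = [PinRole.ID]
        # 2. Identification: the chip has reported its type (the Plug object).
        # 3. Switching: route the requested pins to data, the rest to power.
        roles += [PinRole.DATA] * plug.data_pins
        roles += [PinRole.POWER] * (self.PIN_COUNT - len(roles))
        self.roles = roles

    def remove(self) -> None:
        # Pins are deactivated again as soon as the plug is gone.
        self.roles = [PinRole.IDLE] * self.PIN_COUNT


if __name__ == "__main__":
    port = Port()
    port.insert(Plug(kind="usb-cable", data_pins=2))
    print([role.value for role in port.roles])
    port.remove()
    print([role.value for role in port.roles])
```

The point of the sketch is only that pin roles are decided after the plug identifies itself, so counting pins tells you little about what the connector can carry.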

I’ll be seriously surprised if even one of those points is not verified when the specs come out. And this is what is meant by “future-proof”. Re-using USB and micro-USB (or any existing standard) could never do any of that.

Update: just saw this article which purports to show the pinouts of the current Lightning-to-USB2 cable. “…dynamically assigns pins to allow for reversible use” is of course obvious, if you put together the “adaptive” and “reversible” points from this picture of the iPhone 5 event. Regarding the pinout they published, it’s not radially symmetrical as I thought it would be (except for two pins), so I really would like a confirmation from some site like iFixit (I hear they’ll do a teardown soon). They also say:

Dynamic pin assignment performed by the iPhone 5 could also help explain the inclusion of authentication chips within Lighting cables. The chip is located between the V+ contact of the USB and the power pin of the Lightning plug.

I really see no justification for the “authentication chip” hypothesis, and even their diagram doesn’t show any single “power pin of the Lightning plug”. It’s clear that, once the cable’s type has been negotiated with the device, and the device has checked if there’s a charger, a peripheral or a computer on the other end, the power input from the USB side is switched to however many pins are required to carry the available current.

Update#2: I was alerted to this post, which states:

The iPhone 5 switches on by itself, even when the USB end [of the Lightning-to-USB cable] is not plugged in.

Hm. This would lend weight to my statement that a configuration protocol between device and Lightning plug runs just after plug-in – after all, such a protocol wouldn’t work with the device powered off. It also means that the protocol is implemented in software on the device side; otherwise they could just run it silently, until it really appears that the entire device needs to power up.

Still, there’s the question of what happens when the device battery is entirely discharged. I suppose there’s some sort of fallback circuit that allows the device to be powered up from the charger in that case.

Finally, I’ve just visited an Apple Store where I could get my first look at an iPhone 5. The plug is really very tiny but looks solid.

Update#3: yet another article reviving the authentication chip rumor. Recall how a similar flap about authentication chips in Apple’s headphone cables was finally put to rest? It’s the same thing; the chip in headphones simply implemented Apple’s signalling protocol to control iPods from the headphone cable controls. The chip in the Lightning connector simply implements Apple’s connector recognition protocol and switches charging/supply current.

Apple is building these chips in quantity for their own use and will probably make them available to qualified MFi program participants at cost – after all, it’s in their interest to make accessories widely available, not “restrain availability”.

Now, we hear that “only Apple-approved manufacturing facilities will be allowed to produce Lightning connector accessories”. That makes sense in that manufacturing tolerances on the new connector seem to be very tight and critical. Apple certainly wouldn’t want cheap knock-offs of the connector causing shorts, seating loosely or implementing the recognition protocol in a wrong way; this would reflect badly on the devices themselves, just as with apps. Think of this as the App Store for accessory manufacturers. 🙂

Update#4: new articles have come out with more information, confirming my reasoning.

The folks at Chipworks have done a more professional teardown, revealing that the connector contains, as expected, a couple of power-switching/regulating chips, as well as a previously unknown TI BQ2025 chip, which appears to contain a small amount of EPROM and implements some additional logic, power-switching, and TI’s SDQ serial signalling interface. SDQ also uses CRC checking on the message packets, so a CRC generator would be on the chip. Somewhat confusingly, Chipworks refer to CRC as a “security feature”, perhaps trying to tie into the authentication angle, but of course any serial protocol has some sort of CRC checking just to discard packets corrupted by noise.
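To show why a CRC is an error check and not a security feature, here is a minimal sketch of a generic CRC-8. I don’t know which polynomial or packet layout SDQ actually uses, so the polynomial 0x07, the zero initial value and the `frame`/`is_intact` helpers are assumptions chosen only for illustration.

```python
def crc8(data: bytes, poly: int = 0x07) -> int:
    """Bitwise CRC-8, MSB first, initial value 0, no final XOR.

    The polynomial is an assumption; it is not taken from the SDQ spec.
    """
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def frame(payload: bytes) -> bytes:
    """Append the CRC byte to a payload, as a sender would."""
    return payload + bytes([crc8(payload)])


def is_intact(packet: bytes) -> bool:
    """With this CRC variant, an undamaged packet leaves a remainder of 0."""
    return crc8(packet) == 0


if __name__ == "__main__":
    packet = frame(b"\x01\x02\x03")
    print(is_intact(packet))                  # undamaged packet
    damaged = bytes([packet[0] ^ 0x10]) + packet[1:]
    print(is_intact(damaged))                 # one bit flipped by "noise"
```

Anybody can compute the CRC for any payload, since no secret is involved. It catches accidental corruption, but it does nothing to prove who built the cable.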

Anandtech has additional information:

Apple calls Lightning an “adaptive” interface, and what this really means are different connectors with different chips inside for negotiating appropriate I/O from the host device. The way this works is that Apple sells MFi members Lightning connectors which they build into their device, and at present those come in 4 different signaling configurations with 2 physical models. There’s USB Host, USB Device, Serial, and charging only modes, and both a cable and dock variant with a metal support bracket, for a grand total of 8 different Lightning connector SKUs to choose from.

…Thus, the connector chip inside isn’t so much an “authenticator” but rather a negotiation aide to signal what is required from the host device.

Finally, there’s the iFixit iPod Nano 7th-gen teardown. What’s important here is that this is the thinnest device so far that uses Lightning, and it’s just 5.4mm (0.21″) thick. From the pictures you can see that devices can’t get much thinner without the connector thickness becoming the limiting factor.

Update#5: the Wikipedia article now shows a supposedly definitive pin-out (and the iFixit iPhone 5 teardown links to that). Although I can’t find an independent source for the pin-out, it shows two identification pins, two differential data lanes, and a fixed power pin. Should this be confirmed it would mean that the connector is less adaptive with regard to switching data and power pins; on the other hand, that pinout may well be just an indication of the default configuration for USB-type cables (that is, after the chips have negotiated the connection).

This is yet another follow-up to my posts about Apple’s new Lightning mobile connector.

The cool folks at iFixit have now published their comprehensive teardown of the iPhone 5. (Hopefully the other 2 new devices will also be done soon.)

Here’s a detail view of the Lightning connector inside the case: (click on all images to see the hires version from their site)

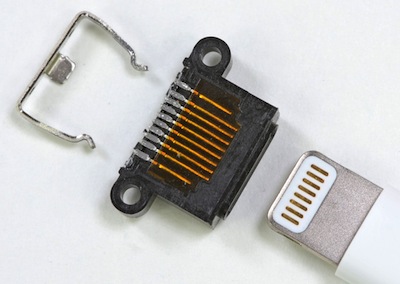

Notice two screws securing the connector body to the device case, and the metal bracket that keeps the other end from flexing. Here’s a closeup of the disassembled connector and of the plug:

Remember that the inside of the case is milled out of a solid block of metal, so this design looks to be much less breakable than the old 30pin version – I’ve been told that the tab end of the plug also feels very sturdy. Here’s a close-up of both connectors:

The space savings are considerable. I read that Apple has no plans to do a dock, so this looks to be a third-party opportunity. The previous connector had no serious protection against flexing, so previous docks had to grip the back and bottom of the device, which also led to a profusion of plastic dock adapters; Lightning docks should be able to get away with just a simple generic back support.

Confirming the rest of my speculations regarding the “adaptive” part of the Lightning interface will have to wait until the specifications leak… stay tuned.

Update: just saw a report about a teardown of the Lightning plug: “Peter from Double Helix Cables took apart the Lightning connector and found inside what appear to be authentication chips. He found a chip located between the V+ contact of the USB and the power pin on the new Lightning plug.”

It’s interesting how people assume that Lightning is just a pin-compatible extension of USB (which also explains why they feel that a cable should cost only a dollar or two). Note also that nobody knows for sure yet which pin is “the power pin”. Unfortunately the picture is very unclear; it seems that there are three chips and a few passive components, at least on that side. Which, of course, goes far to confirm my hypothesis that the cable contains circuitry to tell the device which sort of adapter/cable is connected, as well as signal drivers/conditioners for USB2 (for this specific cable). The large chip, if it is really “directly in the signal path of the V+ wire”, probably switches the charging current to the appropriate pins on the device, once the interface has adapted to the cable – all this “authentication chip” paranoia is just – paranoia.

Update#2: it says here: “Included in the new high-tech part is a unique design which the analyst says is likely to feature a pin-out with four contacts dedicated to data, two for accessories, one for power and a ground. Two of the data transmission pins may be reserved for future input/output technology like USB 3.0 or perhaps even Thunderbolt, though this is merely speculation.”

See what I mean about people thinking that all pins are equal? What do they think that “adaptive” means, anyway?

Update#3: John Siracusa and Dan Benjamin agreed with my points in their latest talk show (references start just after the 49:00 mark) and they even sorta pronounced my name right. Thanks guys! 😉

Update#4: found a good discussion of the Lightning (published over a month ago!), with somewhat blurry pictures of a disassembled Lightning plug. They seem to match well with the linked pictures in the first update, above.

Update#5: my final summary. Please comment there, comments here are now closed.

This is a follow-up to my post about Apple’s new Lightning mobile connector. Thanks to all who linked or commented.

Apple has since published mechanical drawings of the iPhone 5, iPod nano 7th gen, and iPod touch 5th gen. The nano’s drawing has the best illustration of the connector side:

Applying the known width of 10.02mm to the connector photos, it would seem that the projecting part of the plug is about 1.6mm thick and 6.9mm wide. It so happens that this matches closely the standard printed circuit board thickness of 62 mils (1.57mm). The conclusion is clear: the plug consists of a PCB with 8 contacts on each side in a protective metal cladding. The PCB inside the plug’s body will have components on each side; remember the Thunderbolt cable teardown?
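For anyone who wants to repeat the estimate, the arithmetic is simple proportional scaling plus a unit conversion. In the sketch below the pixel counts are hypothetical (I didn’t record the actual values), and the board thickness is taken as the nominal 1/16 inch, which is 62.5 mils.

```python
MM_PER_MIL = 0.0254  # 1 mil = 1/1000 inch = 0.0254 mm exactly


def mils_to_mm(mils: float) -> float:
    """Convert thousandths of an inch to millimetres."""
    return mils * MM_PER_MIL


def scale_from_photo(reference_mm: float, reference_px: float,
                     measured_px: float) -> float:
    """Estimate a real length from a photo with a known reference length."""
    return measured_px * reference_mm / reference_px


if __name__ == "__main__":
    # Hypothetical pixel measurements: the 10.02 mm reference spans 501 px.
    thickness = scale_from_photo(10.02, 501, 80)
    width = scale_from_photo(10.02, 501, 345)
    print(f"plug tab: {thickness:.2f} mm thick, {width:.2f} mm wide")
    print(f"nominal 1/16 inch PCB: {mils_to_mm(62.5):.4f} mm")
```

With these made-up pixel counts the tab comes out at about 1.6mm by 6.9mm, within a few hundredths of a millimetre of a nominal 1/16 inch board.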

The actual interface specifications are still not available to the public – in fact, I think the old 30-pin connector specs never were officially available. What information we have is reverse-engineered or leaked from companies that are in Apple’s MFi program. But connector trends are clear. Some of you may remember the old SCSI 50-pin and Centronics 36-pin connectors; both interfaces were comparatively low-speed parallel and were replaced by higher-speed USB and FireWire serial interfaces. Thunderbolt, for instance, despite its relatively numerous 20 pins, is a serial interface. It has two full-duplex, differential, 10Gbps data paths (meaning 2 pins for each of 4 transmit/receive pairs) as well as two low-speed transmit/receive pins, a connector sensing pin, a power pin, and several ground/shield pins. This is still overkill for the new generation of mobile devices, hence Lightning, which has only 8 pins (plus the outer ground/shield) and, no doubt, lower speeds.

So what are the technical reasons to go with Yet Another Proprietary Connector? Apple has, of course, gone down that road so often that their engineers are well-aware of the tradeoffs involved. We’ve seen the same happen a year or two ago: instead of going to USB3 or eSATA for external devices, they developed Thunderbolt (together with Intel), which is both faster and much more flexible.

Many people complain that Apple should have chosen micro-USB instead of a proprietary connector. The micro-B connector is almost exactly the same size as the Lightning, though it has only 5 pins and is not reversible. The USB3 version of micro-B has 5 extra pins in a separate section and twice the width of the USB2 version. USB3 has actually two different pin groups: one implements the USB2 standard while 2 extra signal pairs handle the new specification. As I mentioned before, usually the USB2 version has limited current carrying capability which would make it unsuitable for the upcoming Lightning iPad; the USB3 version has enough capability but is larger. Non-standard variations are already on the market, and yes, some connector manufacturers are now specifying higher current capacity on the two power pins. True, there are standards being implemented that expand these limits even more, but they’re not quite on the market yet.

Designing an interface means balancing many technical and design trade-offs, especially for a mobile device where device volume, battery capacity, thermal limitations, available PCB area, charging parameters and statistics of intended use all impose serious constraints. Let’s for the moment posit Apple had gone the micro-USB3 route. This would mean a dedicated USB3 host controller/transceiver chip like the TI TUSB1310A as well as several added passive components. This representative chip actually contains a secondary USB2 controller/transceiver for compatibility, has a peak power consumption of nearly 0.5W while operational, and requires 3 different power supplies. The chip alone is over 12×12mm. More important, Apple’s SoC (system-on-a-chip) design means that their main chip has to implement the transceivers’ dual data interface and various control signals; several dozen of the chip’s 175 pins would connect to the SoC, needing additional PCB space.

In contrast, support for Lightning will probably need no extra chips and less than a dozen extra pins on the SoC; 8 of these will go straight to the connector. One or two of the pins will probably sense which kind of adapter or charger/cable is connected and the others will go, in parallel, to the power controller – switching them will allow enough current for charging without overloading any particular pin. Any current-hungry drivers, signal converters and so forth wouldn’t be on the motherboard at all but inside the plug itself, further reducing cost and power consumption for the bare device. The interface may even be self-clocking, with a clock signal routed to the connector whenever necessary, so future systems could implement higher speeds.

All this flexibility means that, probably, the Lightning-to-USB2 cable included with the current devices has a driver/signal conditioner chip embedded inside the plug. Any standard charger may be used but Apple’s charger already has a way of signaling its capabilities to the device. The Lightning-to-30pin adapters have, in addition, at least a stereo D/A converter for line audio out. Upcoming HDMI and VGA adapters will receive audio/video signals in serial form and convert them to the appropriate formats. Third parties can, should Apple’s software allow it, build audio inputs, digital samplers, video-in or medical instrumentation adapters. All this without impacting users who need none of these adapters, but just want to charge their device.

Could all this also be done with USB2 or 3? Well, in principle, yes, but at a cost. Other manufacturers are using, for instance, MHL, which passes audio/video over the USB connector. However, this simply repurposes the connector itself – you need a separate controller chip to generate the MHL signals while the USB controller is turned off, unless you make yet another non-standard plug. (Note that MHL adapters are in the same price range as Apple’s adapter, so nothing gained there either.)

Update: forgot to mention that, with the assumptions above, nothing but SoC speed restrictions preclude Apple from releasing a Lightning-to-USB3 cable in the future.

Update#2: my comments on the physical details of the connector.

Update#3: my final summary. Please comment there, comments here are now closed.

In keeping with recent meteorological themes – iCloud, Thunderbolt – yesterday Apple introduced the new “Lightning” connector for its mobile hardware. This will be the replacement for the venerable 30-pin dock connector introduced 9 years ago. I haven’t seen it in person yet, but here’s some speculation on how it may work.

First, here’s a composite image of the plug and of the new connector (which is, apparently, codenamed “hero”):

You can see that there are 8 pins and that the plug has a metallic tip which, from all accounts, serves as the neutral/return/ground. Since the plug is described as “reversible”, the same 8 pins are present on the other side and, internally to the shell, connected to the same wires. However, inside the connector you can clearly see that the mating pins exist on one side only – presumably to reduce the internal height of the connector by a millimeter or two, at the expense of slightly better reliability and a doubled current capacity.

There are locking springs on the side of the connector that mate with the cavities on the plug, hold it in place, and serve as the ground terminal. The ground is connected before the signal pins to protect against static and the rounded metal at the tip (from the photos it seems to be slightly roughened) wipes against the mating pins to remove any dirt or oxide buildup. At the same time, the pins on the connector (not on the plug!) are briefly shorted to ground when the plug is inserted or removed, alerting the sensing circuitry to that.

People keep asking why Apple didn’t opt for the micro-USB connector. The answer is simple: that connector isn’t smart enough. It has only 5 pins: +5V, Ground, 2 digital data pins, and a sense pin, so most of the dock connector functions wouldn’t work – only charging and syncing would. Also, the pins are so small that no current plug/connector manufacturer allows the 2A needed for iPad charging. Note that this refers to individual pins; I’ve been told that several devices manage to get around this by some trick or other, but I couldn’t find any standard for doing so.

This takes us back to the sensing circuitry referred to. If one of the pins is reserved for sensing – even if it is the “dumb” sensing type that Apple has used in the previous generation, using resistors to ground – and two pins are mapped directly to the 2 USB data pins [update: I now think such direct support is unlikely], then, whether the USB side is plugged into a charger or into a computer’s USB port, the other 5 pins can be used for charging current without overloading any single pin.
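The pin arithmetic in that paragraph can be written out as a small sketch. The split below (1 sense pin, 2 data pins, the rest for power) is only the speculation from the text, not a known pinout, and the function names are made up for illustration; equal current sharing between pins is also an assumption.

```python
# Hypothetical pin budget for the 8-pin plug, following the speculation above.
# None of these role assignments are confirmed; they are assumptions.
TOTAL_PINS = 8                 # contacts visible on one side of the plug
SENSE_PINS = 1                 # assumed: one pin reserved for accessory sensing
USB_DATA_PINS = 2              # assumed: two pins mapped to the USB 2.0 data pair
IPAD_CHARGE_CURRENT_A = 2.0    # charging current cited for the iPad


def power_pins(total=TOTAL_PINS, sense=SENSE_PINS, data=USB_DATA_PINS):
    """Pins left over for charging once sensing and data are assigned."""
    remaining = total - sense - data
    if remaining <= 0:
        raise ValueError("no pins left for power")
    return remaining


def current_per_pin(total_current_a, pins):
    """Current each pin carries if the load is shared equally (an idealization)."""
    return total_current_a / pins


pins = power_pins()
per_pin = current_per_pin(IPAD_CHARGE_CURRENT_A, pins)
print(f"{pins} power pins, {per_pin:.2f} A per pin")
```

Under these assumptions the 2A load is spread over 5 pins, so each one carries well under half an amp, which is the point of the paragraph: no single pin has to handle the full charging current.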

This also explains the size (and price) of the Lightning-to-30 pin adapter. It has to demultiplex the new digital signals and generate most of the old 30-pin signals, including audio and serial transmit and receive. The adapter does say “video and iPod Out not supported”; I’m not sure if the latter refers to audio out, though I’m now informed that the latter exports the iPod interface to certain car dashboards.

It’s as yet unknown whether Lightning will, in the future, support the new USB 3.0 spec – the current Lightning to USB cable supports only USB 2.0. This would require 6 (instead of 2) data pins, which is well within the connector’s capabilities. But would the mobile device’s memory, CPU and system bus support the high transfer rate? My guess is, not currently. Time will tell.
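As a rough check on the pin count, here is the same kind of budget for both USB generations. It assumes, as before, that one pin stays reserved for sensing and that pin roles are fixed; both are guesses, and the helper name is hypothetical.

```python
# Hypothetical fixed-role pin budget; the sense-pin assumption is carried
# over from the speculation earlier in this post and is not confirmed.
TOTAL_PINS = 8
SENSE_PINS = 1


def pins_left_for_power(data_pins, total=TOTAL_PINS, sense=SENSE_PINS):
    """Pins not taken by sensing or data under a fixed assignment."""
    return total - sense - data_pins


for label, data_pins in (("USB 2.0", 2), ("USB 3.0", 6)):
    left = pins_left_for_power(data_pins)
    print(f"{label}: {data_pins} data pins, {left} left for power")
```

So 6 data pins do fit in 8, but with fixed roles only one pin would remain for power. If that holds, a USB 3.0 mode would presumably need pins whose roles change depending on what is plugged in.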

Update: deleted the reference to “hero” (I didn’t know it’s designer’s jargon). Also, for completeness, I just saw there’s a Lightning to micro-USB adapter for European users, where micro-USB is the standard.

Update#2: good article at Macworld about Lightning. Also, Dan Frakes confirms that Apple says audio input is not included in the 30-pin adapter.

Update#3: found out what “iPod out” means, and fixed the reference.

Update#4: I added to the micro-USB paragraph, above. Thanks to several high-profile references (The Loop, Business Insider, Ars Technica – who also credited my composite picture – and dozens of others), I saw a neat traffic spike here. WP Super Cache held up well. The comments on all those sites are – interesting. 🙂 Of course whoever is convinced that everything is a sinister conspiracy by Apple won’t be convinced by any technical argument, and I want to restrict myself to the engineering aspects.

Update#5: more thoughts (and some corrections) in the follow-up post. Please read that first and then comment over there.

If you missed it, here’s part 1.

Now, as I said, hardware details are becoming interesting only to developers – and even we don’t need to care overly about what CPU we’re developing for, now that we’re used to both 32-bit and 64-bit, big-endian and little-endian machines. (Game developers and players, of course, are a different demographic.)

As Steve Jobs said, it’s all about the software now. Here, too, too much emphasis on feature details can be misleading. I don’t really care whether Apple copied the notification graphic from Android, or whether it was the other way around. What’s important is that user interfaces are evolving by cross-pollination from many sources, and this is particularly interesting regarding iOS and OS X (note that the “Mac” prefix seems to be on its way out).

The two operating systems have always had the same underpinnings in BSD Unix/Darwin and in several higher layers like Cocoa and many of the various Core managers. In their new versions, APIs from one are appearing in the other, and UI aspects are similarly being interchanged; compare, for instance, the Lion LaunchPad against the iOS SpringBoard (informally known to iOS users as “the app screen”).

Apple is not “converging” OS X and iOS just for convergence’s sake. Although desktops, laptops, tablets, phones and music players are all just “devices” now, the usage and form factor differences must be taken into account. Remember Apple’s 2×2 product matrix some years ago: desktops and laptops, consumer and pro machines? It hasn’t shown up lately, and we really need a new matrix; the new one should probably be mobile and fixed, keyboard and touchscreen.

Don’t be misled by appearances! Yes, the LaunchPad looks like SpringBoard, but that doesn’t mean that we’ll have touchscreen desktops soon – rather, both interfaces are, in fact, a consequence of the respective App Store, being an easy way to show downloaded apps to the lay user. Apple is, however, exploring gesture-based interfaces and no doubt we’ll see the current gestures evolving into a universal set employed on all devices, the same way common keyboard shortcuts have become ingrained. A common thread here is that hardware advances like touchpads, denser and thinner screens, better batteries and faster connections are becoming the main drivers of innovation, as processor speed and storage size used to be.

A subtle and very Apple-like aspect of this sort of convergence became visible when the iPad came out. While some scoffed that the iPad was “just a larger iPod Touch”, in fact the iPod Touch had been, all the time, just a baby, trial-size version of the iPad! The Touch, the iPhone, and even the older iPods were an admirable way of getting the public used to keyboardless interfaces, and the iTunes Store was a similar precursor to the App Store. This means that when the iPad came out there was a legion of users already trained in its concepts and interface; an excellent trick, and one that only Apple could pull off.

Now we see that, in a similar way, the iPad and its smaller siblings are preparing the general public for migrating to larger, more powerful devices which look comfortingly similar in many ways. Few consumers think of their iPhones or iPods as computers, even though they’re as capable as the supercomputers of 15 or 20 years ago. Now that desktops and laptops are just devices – and you won’t need a so-called computer anymore to set up your smaller devices – very soon this new class of “devices with keyboards” won’t be thought of as computers either, and the term will be used only for servers and mainframes, as it was in the old days.

I, for one, welcome our new post-PC overlords… 🙂